IPP Passport

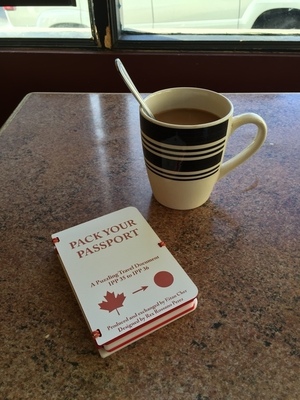

June 9, 2016

Eitan Cher handed this to me at a board game night on Monday:

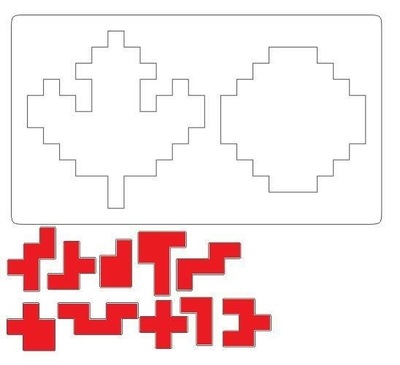

Inside were two maddening jigsaw puzzles designed by Rex Rossano Perez:

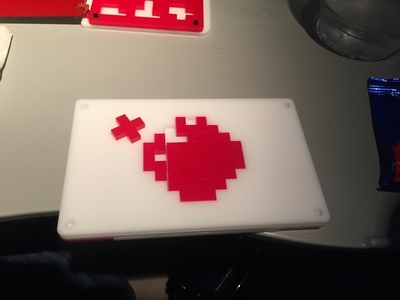

Eitan created a beautiful laser-cut plastic passport out of these two patterns.

The Canadian flag:

And the Japanese flag:

Almost immediately, I knew I wasn’t going to solve this puzzle by hand. During a flight to Wisconsin yesterday, I got a chance to code up a solution in Python 3 (see GitHub).

To avoid spoilers, I won’t post the solutions here, but you can find all the solutions to the maple leaf here, and all the solutions to the sun here.

My implementation was a straightforward BFS with some pruning: if we ever end up in a position where we’ve created an island of empty space smaller than our smallest piece, there’s no need to keep searching from that position.
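To illustrate the pruning idea, here is a minimal sketch of an island check. This is not the code from my repository. It assumes the board is a square grid whose empty cells are given as `(row, col)` tuples and that cells are adjacent only orthogonally, and the name `has_dead_island` is made up for this example. The real puzzle may need a different notion of adjacency.

```python
from collections import deque


def has_dead_island(empty_cells, smallest_piece_size):
    """Return True if some connected region of empty cells is too small
    to hold even the smallest piece (assumes a square grid, 4-neighbors)."""
    remaining = set(empty_cells)
    while remaining:
        # Flood-fill one island, removing its cells from `remaining` as we go.
        start = remaining.pop()
        queue = deque([start])
        size = 1
        while queue:
            r, c = queue.popleft()
            for neighbor in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                if neighbor in remaining:
                    remaining.remove(neighbor)
                    queue.append(neighbor)
                    size += 1
        if size < smallest_piece_size:
            return True  # nothing can ever fill this island, so prune
    return False
```

A search would call something like this after each placement and drop the position whenever it returns `True`, since no piece can ever fill the undersized island.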

I made no attempt to parallelize or to reduce via symmetry. Solving the maple leaf starting with the stem took 6 minutes on my laptop, and the sun took 1.6 hours.

I used this as an opportunity to try out some ideas that have been bouncing around in my head since watching this video on functional programming. I definitely want to try out a real functional programming language next.